Using AI Hallucinations to Evaluate Image Realism

New research from Russia proposes an unconventional method to detect unrealistic AI-generated images – not by improving the accuracy of large vision-language models (LVLMs), but by intentionally leveraging their tendency to hallucinate. The novel approach extracts multiple ‘atomic facts’ about an image using LVLMs, then applies natural language inference (NLI) to systematically measure contradictions among […] The post Using AI Hallucinations to Evaluate Image Realism appeared first on Unite.AI. [...]
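As a rough illustration of the idea in the teaser, the sketch below scores a set of atomic facts by how often they contradict one another. It is a minimal sketch under stated assumptions, not the researchers' implementation: `toy_contradiction_prob` is a hypothetical stand-in for a real NLI model, the facts are hand-written rather than produced by an LVLM, and averaging over all ordered pairs is an assumed aggregation that the teaser does not specify.

```python
from itertools import permutations
from typing import Callable, Sequence

# Hypothetical interface: probability that `hypothesis` contradicts `premise`.
NliFn = Callable[[str, str], float]


def toy_contradiction_prob(premise: str, hypothesis: str) -> float:
    """Toy stand-in for an NLI model (assumption, for illustration only).

    Reports a contradiction only when the two statements are identical
    apart from the word "not" appearing in exactly one of them.
    """
    p, h = premise.lower(), hypothesis.lower()
    same_without_negation = p.replace(" not ", " ") == h.replace(" not ", " ")
    negation_differs = (" not " in p) != (" not " in h)
    return 1.0 if same_without_negation and negation_differs else 0.0


def contradiction_score(facts: Sequence[str],
                        nli: NliFn = toy_contradiction_prob) -> float:
    """Mean contradiction probability over all ordered pairs of facts.

    Returns 0.0 when there are fewer than two facts to compare.
    """
    pairs = list(permutations(facts, 2))
    if not pairs:
        return 0.0
    return sum(nli(premise, hypothesis) for premise, hypothesis in pairs) / len(pairs)


# Hand-written example facts (in practice these would come from an LVLM).
facts = [
    "The cat is on the table",
    "The cat is not on the table",
    "The sky is blue",
]
score = contradiction_score(facts)
```

Under this assumed scoring, a higher value means the generated descriptions disagree with each other more often, which the teaser suggests is a signal of an unrealistic image. Swapping in a real NLI model only requires passing a different `nli` callable.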

We found tools similar to what you are looking for. Check out our suggestions for similar AI tools.

Open source Qwen-Image-2512 launches to compete with Google's Nano Banana Pro in high quality AI image generation

When Google released its newest AI image model Nano Banana Pro (aka Gemini 3 Pro Image) in November, it reset expectations for the entire field. For the first time, users of an image model could use na [...]

GPT-5.3 Instant cuts hallucinations by 26.8% as OpenAI shifts focus from speed to accuracy

OpenAI's GPT-5.3 Instant — the company's most widely used model — reduces hallucinations by up to 26.8% compared to its predecessor, prioritizing accuracy and conversational reliability [...]

Lean4: How the theorem prover works and why it's the new competitive edge in AI

Large language models (LLMs) have astounded the world with their capabilities, yet they remain plagued by unpredictability and hallucinations – confidently outputting incorrect information. In high- [...]

Ideogram 3.0's AI image generator gets a realism boost and new editing tools

Ideogram's AI image generator gets a major upgrade, promising more realistic images, a wider range of styles, and a set of new editing tools. Developers can now tap into these features through a [...]

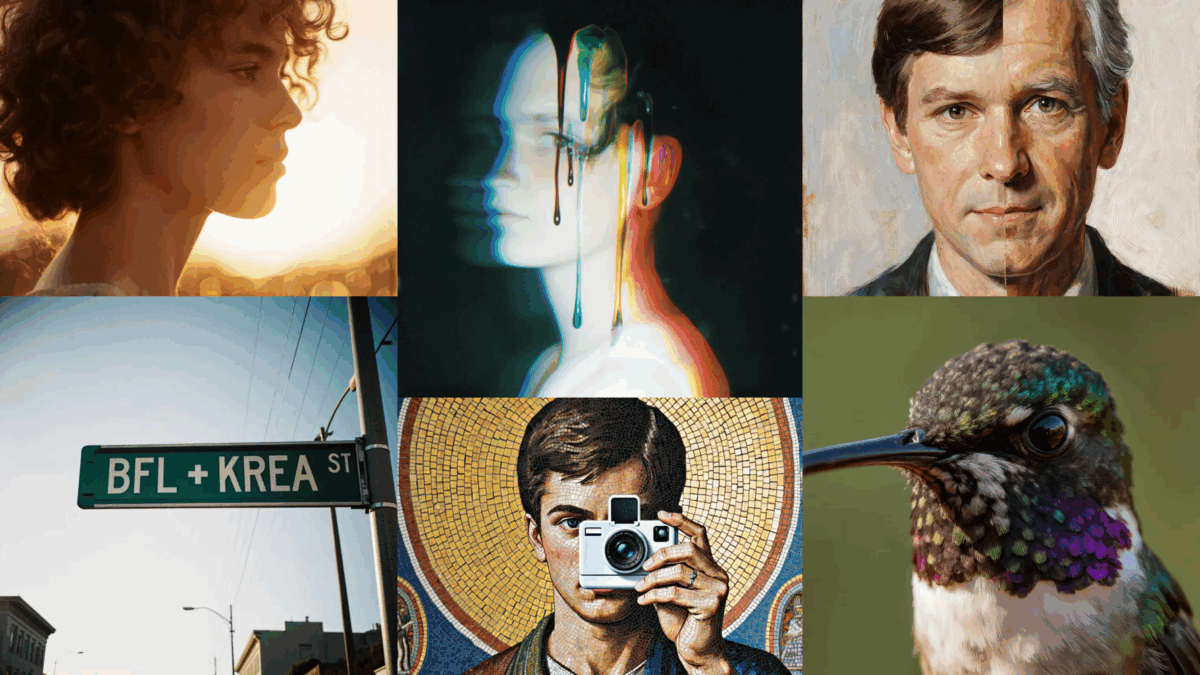

BFL and Krea release FLUX.1 Krea: Open image model designed for realism

Black Forest Labs and Krea AI have released FLUX.1 Krea [dev], an open text-to-image model designed to generate more realistic images with fewer of the exaggerated, AI-typical textures. [...]

Kling 2.6 adds voice control and motion upgrades as AI video tools race toward realism

Few areas have seen as much AI progress this year as video generation. Chinese company Kuaishou is closing out the year with new features for Kling 2.6: a technically impressive update that shows just [...]

Luma AI launches Uni-1, a model that outscores Google and OpenAI while costing up to 30 percent less

The AI image generation market has had an uncontested leader for months. Google's Nano Banana family of models has set the standard for quality, speed, and commercial adoption, while competitors [...]