Rating

Innovation

Pricing

Technology

Usability

We have discovered similar tools to what you are looking for. Check out our suggestions for similar AI tools.

Databricks built a RAG agent it says can handle every kind of enterprise search

Most enterprise RAG pipelines are optimized for one search behavior. They fail silently on the others. A model trained to synthesize cross-document reports handles constraint-driven entity search poor [...]

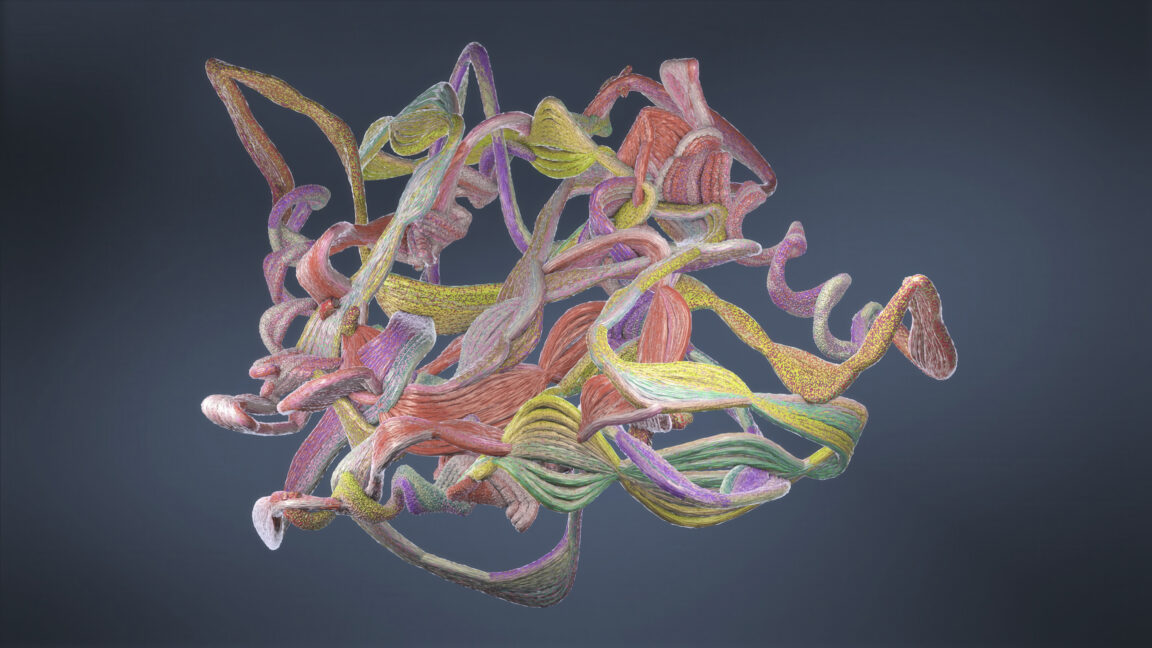

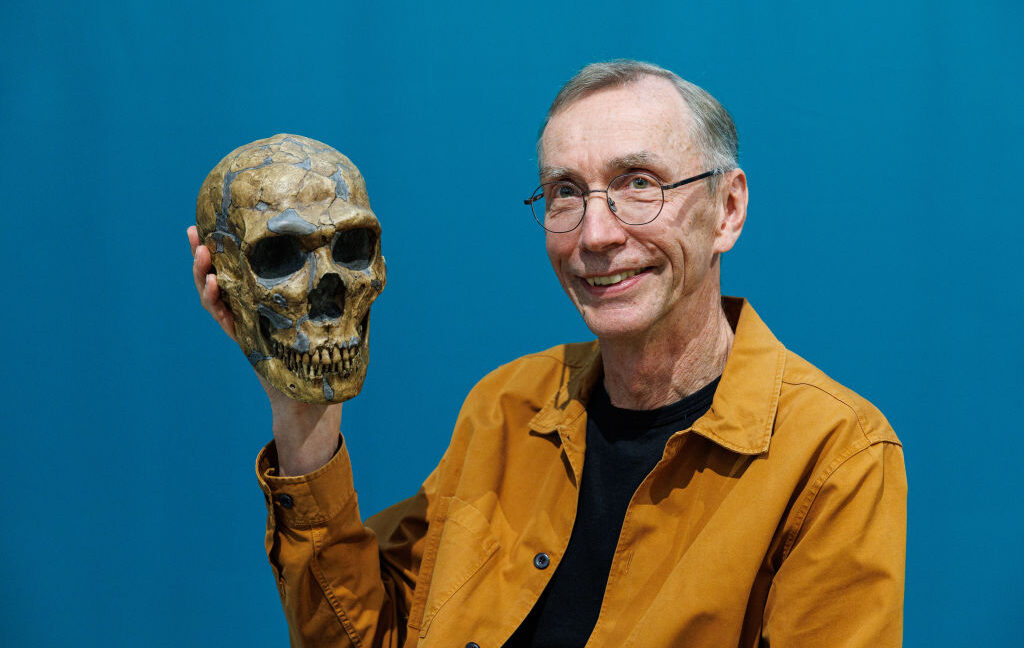

Scientific AI models trained on different data are learning the same internal picture of matter, study finds

MIT researchers compared 59 scientific AI models and found they converge to similar internal representations of molecules, materials, and proteins, even when built on different architectures and train [...]

New 'Markovian Thinking' technique unlocks a path to million-token AI reasoning

Researchers at Mila have proposed a new technique that makes large language models (LLMs) vastly more efficient when performing complex reasoning. Called Markovian Thinking, the approach allows LLMs t [...]

Nvidia researchers boost LLMs reasoning skills by getting them to 'think' during pre-training

Researchers at Nvidia have developed a new technique that flips the script on how large language models (LLMs) learn to reason. The method, called reinforcement learning pre-training (RLP), integrates [...]

Nous Research's NousCoder-14B is an open-source coding model landing right in the Claude Code moment

Nous Research, the open-source artificial intelligence startup backed by crypto venture firm Paradigm, released a new competitive programming model on Monday that it says matches or exceeds several la [...]

Arcee aims to reboot U.S. open source AI with new Trinity models released under Apache 2.0

For much of 2025, the frontier of open-weight language models has been defined not in Silicon Valley or New York City, but in Beijing and Hangzhou.Chinese research labs including Alibaba's Qwen, [...]