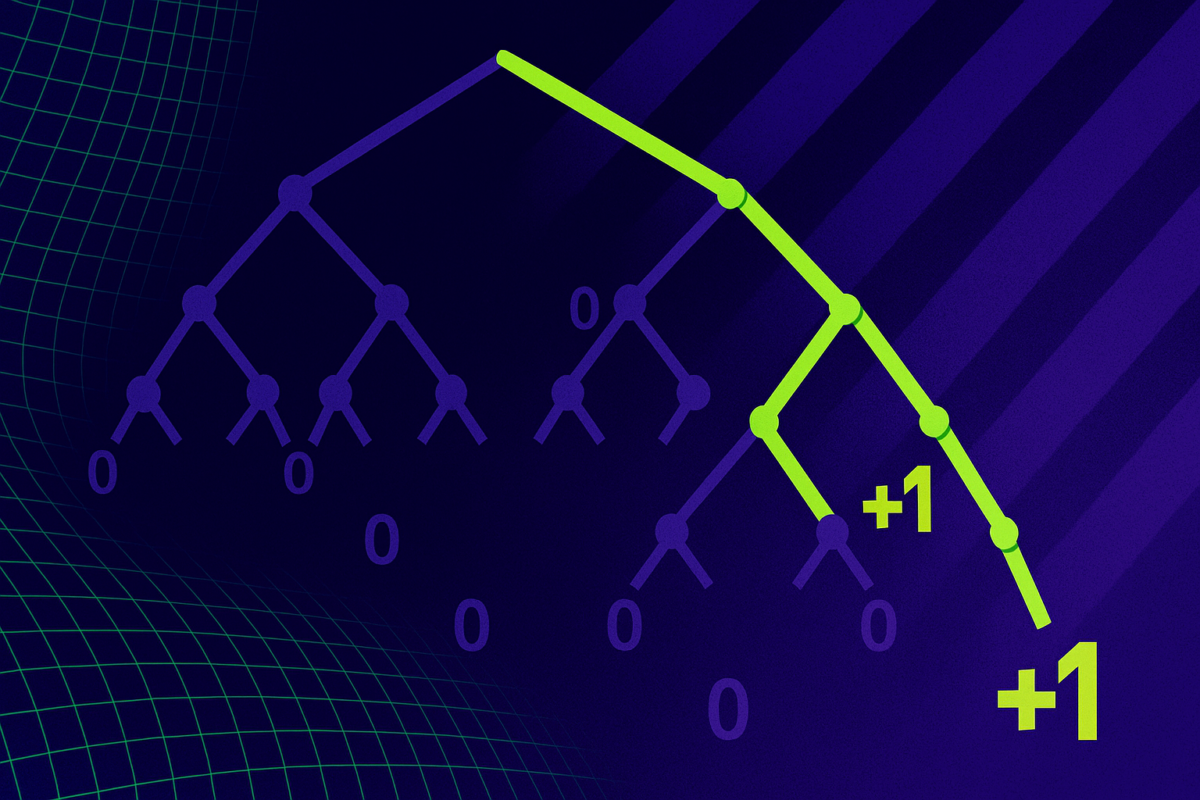

Yet another study finds that overloading LLMs with information leads to worse results

Large language models are supposed to handle millions of tokens - the fragments of words and characters that make up their inputs - at once. But the longer the context, the worse their performance gets.

The article "Yet another study finds that overloading LLMs with information leads to worse results" appeared first on THE DECODER.


We have discovered similar tools to what you are looking for. Check out our suggestions for similar AI tools.

ACE prevents context collapse with ‘evolving playbooks’ for self-improving AI agents

A new framework from Stanford University and SambaNova addresses a critical challenge in building robust AI agents: context engineering. Called Agentic Context Engineering (ACE), the framework automat [...]

Lean4: How the theorem prover works and why it's the new competitive edge in AI

Large language models (LLMs) have astounded the world with their capabilities, yet they remain plagued by unpredictability and hallucinations – confidently outputting incorrect information. In high- [...]

GAM takes aim at “context rot”: A dual-agent memory architecture that outperforms long-context LLMs

For all their superhuman power, today’s AI models suffer from a surprisingly human flaw: They forget. Give an AI assistant a sprawling conversation, a multi-step reasoning task or a project spanning [...]

LLMs struggle with clinical reasoning and are just matching patterns, study finds

A new study in JAMA Network Open raises fresh doubts about whether large language models (LLMs) can actually reason through medical cases or if they're just matching patterns they've seen be [...]

So-called reasoning models are more efficient but not more capable than regular LLMs, study finds

A new study from Tsinghua University and Shanghai Jiao Tong University examines whether reinforcement learning with verifiable rewards (RLVR) helps large language models reason better—or simply make [...]

Gong study: Sales teams using AI generate 77% more revenue per rep

The debate over whether artificial intelligence belongs in the corporate boardroom appears to be over, at least for the people responsible for generating revenue. Seven in ten enterprise revenue lea [...]

Self-improving language models are becoming reality with MIT's updated SEAL technique

Researchers at the Massachusetts Institute of Technology (MIT) are gaining renewed attention for developing and open sourcing a technique that allows large language models (LLMs) — like those underp [...]